Embodied Foundation Model

Our proprietary vision-language-action model lets L-1 understand natural language instructions and map them to physical movements in real time.

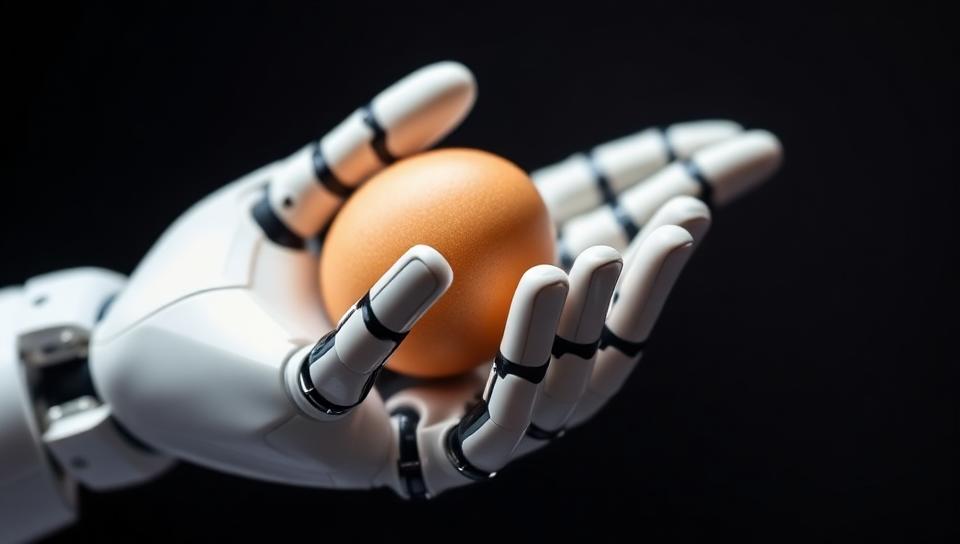

Lemon Robotics develops general-purpose hardware capable of autonomous navigation and complex manipulation. Our first model, L-1, is designed to assist with daily tasks using human-like dexterity.

Built for reasoning and action.

Custom actuators and tactile sensors let L-1 handle delicate objects, from glassware to laundry, with sub-millimeter accuracy.

Based in Delaware, Lemon Robotics is a team of researchers and engineers from leading AI labs and hardware manufacturers. We are focused on solving the fundamental challenges of bringing intelligence into the physical world.